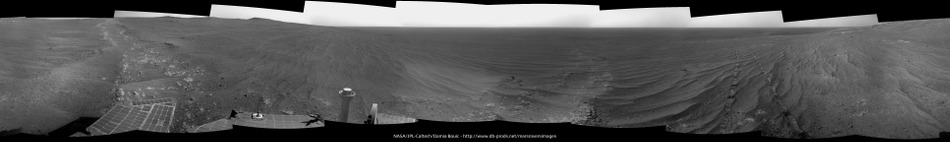

and the Navcam L0 view on Sol 3837.

Jan van Driel

Click to view attachment

Full Version: Cape Tribulation

The Navcam L0 panoramic view taken on Sol 3837 and Sol 3838 and stitched together.

Jan van Driel

Click to view attachment

A quick circular view of the 3840 location - I am not confident enough in the location to map it yet.

Phil

Click to view attachment

Looks like wind cleans this area very very well! With a little luck Opportunity will also experience some cleaning on this ridge.

Is that the actual summit of Cape Tribulation visible on the right here?

http://qt.exploratorium.edu/mars/opportuni...C7P1932L0M1.JPG

Looking at Phil's new location and this proposed route map I think it probably is the summit - there's nothing else between us and the summit that could look that dramatic.

The exposed outcrop just to our east is marked as "science opportunity: crest approach" on that map, so there's a chance we'll stop for a look...

Yes, thanks everybody. It's nice to reconnect to the wider context around Endeavour now that we're up high. I am (pleasantly) surprised that we can see as far as 60 km just now. Fingers crossed for continued dust clearance and a glimpse of the Miyamoto rim.

A circular view of Damia's panorama - showing the fracture very nicely. Thanks for making the panorama, Damia!

Phil

Click to view attachment

Can anyone construct (or link to) a good contour map?

Much of the close terrain is quite gentle here; that makes it pretty difficult to keep track of the gain in altitude just by casual observation of landmarks.

Fascinating stuff. A suggestion for admins: Maybe it's worth splitting recent posts off into a new thread, something along the lines of 'Distant Vistas II, the view from Cape Tribulation'? There's bound to be more of this as we approach the summit. (If that's done feel free to delete this post.)

Good Idea, done. - Mod

Distant Vistas 2 - The view from Cape Tribulation

Sol 3855 pancam F2:

Click to view attachment

All the links originating from the "Return to Mars" webpage http://www.exploratorium.edu/mars/spiritopp.php and leading to our favorite MER images, such as:

http://qt.exploratorium.edu/mars/opportunity/pancam/

or: http://qt.exploratorium.edu/mars/opportunity/navcam/

have been BROKEN since last Sunday.

I just sent an e-mail to their Webmaster. I hope that they will be mended soon.

Could this have anything to do with the latest cutbacks at NASA where the JSC Media Center has been closed permanently and all images from various NASA projects are now being posted only to Instagram and Facebook?

I know this information comes more from the JPL side of the farm and not JSC, obviously, and this particular website is the Exploratorium's page (not directly a NASA website) -- but perhaps they have lost all links to the image downloads due to media cutbacks?

Just wondering.

-the other Doug (With my shield, not yet upon it)

perhaps they have lost all links to the image downloads due to media cutbacks?

If something like that happened I'd guess exploratorium just wouldn't be updated, like we have seen before. But it's actually down, which suggests a server problem with exploratorium.

More flash problems, and plans to reformat again from the latest PS update:

QUOTE

she went into crippled mode on Sol 3857 (November 29, 2014) and again later it appears on Sol 3858... "We appear to have gone into permanent crippled mode," Nelson reported. "In this mode, a problem with the Flash memory prevents creation of a Flash drive. The flight software... then creates a RAM drive. The RAM drive is both smaller, about 1/10th the size of the Flash drive, and volatile, so all contents are lost whenever the rover naps or DeepSleeps."

QUOTE

another reformat "may do the trick," he said. "Even if we were forced to operate only in crippled mode, we could manage," he added. "It gates how fast we take data since we can't store it for later transmission, but we would still have an active mission."

QUOTE

As of post-time, the ops team was preparing a plan for a pre-review Monday morning, December 1st, with hopes for a review and approval to initiate a reformat before the first week of December is out.

You covered that well fredk.

I was about to say that our intrepid rover has major problems with the flash memory.

Years back there was some speculation about how long Opportunity would last and which systems would fail, and the flash memory was one of them.

Now all is not lost; they state it might be able to continue the mission even in 'crippled mode'.

The last word has not been said yet, though. Let's wait and see how this latest reformat turns out.

I'm curious how much this problem resembles the one they had on Mars Express?

It bugs me that flash is the cause of all this headache. Not that flash isn't terribly complex internally, but because it should be reliable as heck regardless.

Oh well. Reminds me in a way of Galileo's sticky tape recorder.

'Reliable as heck'

3800+ Sols into a 90 sol mission despite the harsh temperature cycles and radiation environment on Mars?

I would call that the epitome of 'Reliable as heck'

Flash memory usually has a limited number of rewrite cycles per memory block. So it isn't really surprising, that after more than 10 years of intense use, some of the blocks go bad.

It bugs me that flash is the cause of all this headache. Not that flash isn't terribly complex internally, but because it should be reliable as heck regardless.

If you had bought a commercial flash card in 2002 and used it on Earth as heavily as the flash on MER has been used, it would most likely be non-functional by now.

Testing how the flash degrades over time and validating one's flash management code when, say, half the flash blocks have failed, is quite a job. I wouldn't have done it for the short MER mission, so it's great that it's worked as well as it has.

That said, I'd be curious to know the root cause of the problems so they can be mitigated in the future if possible.

... That said, I'd be curious to know the root cause of the problems so they can be mitigated in the future if possible.

Yes, I have a flash stick right beside me that has only been used for transferring files, no Windows boost or whatever, and it wore out in a handful of years even though it was used in the even temperatures and low background radiation here on Earth. The flash card in one of the two cameras I use for fieldwork failed in an even shorter time span: lots of write cycles on that one, but it also operated across quite a range of temperatures, from -30 to +40 C. So even though it's consumer grade, of course, it does suggest to me that temperature matters for flash memory.

So there's simply no single root cause we can point a finger at; it's a combination of radiation, temperature swings and write cycles. And the latter come not only from our treat of many images at each site; I guess a lot of housekeeping data, communication sessions, driving paths and manoeuvres, and scientific data gets written, sent and then overwritten on a daily basis.

I'd rather give the engineers a double thumbs up for amazing engineering, and another for the fact that there's a contingency plan. So I am with djellison here: the flash memory of Opportunity has triumphed over any expectation by far.

And as a side note: Merry X-mas to all on the forum. (Said in advance, since I will be extremely busy with other things now.)

The Exploratorium MER images links are back !

It looks like new server/software at exploratorium - the sort links are much faster now.

Meanwhile, there's been a recalibration of tau (as happens periodically) - tau values are now substantially lower than the previous estimates and more in line with previous years. This is good news for visibility from the summit. I've been secretly hoping we spend a lot of time doing science here at the fractures so tau has time to drop more before we reach the summit...

If you had bought a commercial flash card in 2002 and used it on Earth as heavily as the flash on MER has been used, it would most likely be non-functional by now.

Testing how the flash degrades over time and validating one's flash management code when, say, half the flash blocks have failed, is quite a job. I wouldn't have done it for the short MER mission, so it's great that it's worked as well as it has.

That said, I'd be curious to know the root cause of the problems so they can be mitigated in the future if possible.

Unsure about that. Write frequency per physical wordline might still be higher on Earth, even with seemingly lower utilization. And if it were simply a high utilization issue, I tend to believe it'd be working surprisingly well as the whole flash architecture is geared towards dealing with a statistical tail of a few bits failing, and continuing to function. And that sort of design leaves a lot of actual overhead. I just do not expect the flash to be the crappiest chip on the board if being old is the only hurdle to jump.

I once participated in what was basically a design review of a flash memory targeted at a 130nm RF process, and the whole system's designed around the flash cells alone being terribly unreliable, in that they fail way too often. They over-test, anneal, repair, throw out bad cells they find at test (they may have had extra wordlines in each sector and an entire extra sector to repair with), then throw ECC on top of memories that test perfect, assuming that some outlier single bits will continue to fail throughout the life of the memory so the ECC *should* be able to repair everything statistically expected for the spec life of the memory.
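To make the "ECC on top of memories that test perfect" idea concrete, here is a minimal sketch of single-bit error correction using a Hamming(7,4) code. This is purely illustrative: it is an assumption chosen for brevity, not the code used in MER's flash or in the memory described above (real flash parts typically use stronger codes over much larger blocks). The function names are made up for this example.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit Hamming codeword."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over codeword positions 4, 5, 6, 7
    # Codeword layout, positions 1..7: p1 p2 d1 p3 d2 d3 d4
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Return (data_bits, error_position); error_position 0 means no error seen."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The syndrome, read as a binary number, is the 1-based position of the bad bit.
    error_position = s1 + 2 * s2 + 4 * s3
    if error_position:
        c[error_position - 1] ^= 1  # flip the failed bit back
    return [c[2], c[4], c[5], c[6]], error_position


if __name__ == "__main__":
    word = hamming74_encode([1, 0, 1, 1])
    word[4] ^= 1  # simulate one cell failing (codeword position 5)
    print(hamming74_decode(word))
```

Any single flipped bit per codeword is repaired; two flipped bits in the same codeword are silently mis-corrected, which is the sense in which ECC only covers the statistical tail of isolated failures.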

And for what it's worth, the physical addresses of the sectors have no correspondence to the logical addresses of those sectors, even after test (internally there's an entire spare sector so that any can be marked bad, and after that the logical-to-physical mapping pingpongs around with a write balancing method of some sort right within the memory, on top of whatever firmware *thinks* it's doing... and I've always wondered how well the software write-balancing understands what the circuit designers put in.) And so if they're trying to debug some sort of problem with a physical address (and they are) and have to trace through at least 2 tiers of logical-to-physical address obfuscation... yuck. But that said, there's so much overhead in the reliability design of the actual memory cells that, if it truly is still a bad-address problem, there should be a fat part of the distribution that is still working fine.
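As a rough picture of the logical-to-physical indirection described here, the following toy model keeps a sector map with one spare sector, retires a bad sector by remapping, and does a naive form of write balancing. Everything in it (the class name `ToySectorMap`, the policy, the sizes) is hypothetical and invented for illustration; it is not how MER's flash or any particular chip actually works.

```python
class ToySectorMap:
    """Toy logical-to-physical sector map with spare sectors (illustrative only)."""

    def __init__(self, logical_sectors, spare_sectors=1):
        total = logical_sectors + spare_sectors
        self.l2p = list(range(logical_sectors))          # logical i -> physical i at first
        self.free = list(range(logical_sectors, total))  # spare physical sectors
        self.bad = set()                                 # retired physical sectors
        self.erase_counts = [0] * total                  # wear per physical sector

    def physical(self, logical):
        """Physical sector currently backing a logical sector."""
        return self.l2p[logical]

    def mark_bad(self, logical):
        """Retire the physical sector behind `logical` and remap it to a spare."""
        if not self.free:
            raise RuntimeError("no spare sectors left")
        self.bad.add(self.l2p[logical])
        self.l2p[logical] = self.free.pop(0)

    def write(self, logical):
        """Simulate a write; move to a less-worn free sector if one exists."""
        current = self.l2p[logical]
        if self.free:
            candidate = min(self.free, key=lambda p: self.erase_counts[p])
            if self.erase_counts[candidate] < self.erase_counts[current]:
                self.free.remove(candidate)
                self.free.append(current)
                self.l2p[logical] = current = candidate
        self.erase_counts[current] += 1
        return current


if __name__ == "__main__":
    m = ToySectorMap(logical_sectors=2, spare_sectors=1)
    print([m.write(0) for _ in range(4)])  # physical sector used by each write
```

Even in this tiny model the same logical address lands on different physical sectors over time, which is why tracing a fault back to a physical location through two such layers is unpleasant.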

So *I* guess they're probably dealing with something other than a bad address problem, and that's a pity because the bits are still working, but you can't write and read them because of a problem in the pipe. I think some people jump to the conclusion that because it's flash, and flash cells truly have a limited life, this has to be that sort of issue. My comment is that if it truly is that sort of issue, the memory shouldn't be as crippled as recently described. It should be in the tail of the distribution of single-bit failures, not wholesale unusability, even more than 10 years into the chip's life.

The flipside is that the radiation environment just shifted the bell curve, and they're now into the fat lot of bit failures that the chip's devs would've expected sometime in the far future. And that the overhead's all gone. *That* would be important to know thoroughly.

Can anyone here make sense of this? To me it looks like a rock that has been fluidised and deformed, possibly by an impact shock.

http://qt.exploratorium.edu/mars/opportuni...00P2303L2M1.JPG

That's an image from Sol 1. Landing day. It's airbag scrape marks in the dirt.

Look at the date on the URL. 2004-01-25

2004.

It's a single frame from this Mosaic

http://mars.nasa.gov/mer/gallery/press/opp...stcard_part.jpg

OK, then please delete the post, and this one. But I found that image at Exploratorium labelled with a 2014 date. Is it a temporary problem with Exploratorium? (If the answer's obvious to everyone else and I'm not getting it please move to PMs)

http://qt.exploratorium.edu/mars/opportunity/pancam/2004-01-25/1P128287268EFF0000P2303L2M1.JPG

I'm sure you're right about the image, but why do I find it on today's page at exploratorium? How do I identify the genuinely new ones?

It happens. Sometimes there are pipeline burps and old images appear. Not every image that appears in Exploratorium is 'new'

Only way to be sure is to check the site ID ( in that image it's 0000... currently Opportunity is at CJL1 )

Or take the file name and run it thru something like this : http://www.greuti.ch/oppy/html/filenames_ltst.htm
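For anyone who wants to do the site-ID check by script, here is a minimal sketch that splits a 27-character MER image file name into fields and pulls out the site/drive code. The field layout is my reading of the naming convention (spacecraft, instrument, 9-digit spacecraft clock, 3-letter product type, 2-character site, 2-character drive, 5-character sequence, eye, filter, producer, version). The clock-to-date conversion is an assumption: it treats the clock as seconds since roughly 2000-01-01 12:00 UTC and ignores leap seconds and clock drift, so it is only good to about the day. The helper names are made up for this example.

```python
from datetime import datetime, timedelta
from typing import NamedTuple

# Assumption: spacecraft clock counts seconds from about 2000-01-01 12:00 UTC.
SCLK_EPOCH = datetime(2000, 1, 1, 12, 0, 0)


class MerFilename(NamedTuple):
    spacecraft: str   # "1" = Opportunity, "2" = Spirit
    instrument: str   # e.g. "P" = Pancam, "N" = Navcam
    sclk: int         # spacecraft clock, seconds
    product: str      # e.g. "EFF", "ETH", "ESF"
    site: str
    drive: str
    sequence: str
    eye: str
    filter_id: str
    producer: str
    version: str


def parse_mer_filename(name):
    """Parse a MER image file name (or a URL ending in one) into its fields."""
    stem = name.rsplit("/", 1)[-1].split(".", 1)[0]
    if len(stem) != 27:
        raise ValueError(f"expected a 27-character name, got {stem!r}")
    return MerFilename(
        stem[0], stem[1], int(stem[2:11]), stem[11:14], stem[14:16],
        stem[16:18], stem[18:23], stem[23], stem[24], stem[25], stem[26],
    )


def approx_utc(sclk):
    """Very rough UTC time for a spacecraft clock value (see assumption above)."""
    return SCLK_EPOCH + timedelta(seconds=sclk)


def is_from_site(name, site_drive):
    """True if the image's site+drive code matches, e.g. 'CJL1'."""
    f = parse_mer_filename(name)
    return f.site + f.drive == site_drive


if __name__ == "__main__":
    f = parse_mer_filename("1P128287268EFF0000P2303L2M1.JPG")
    print(f.site + f.drive, approx_utc(f.sclk).date(), is_from_site(
        "1P128287268EFF0000P2303L2M1.JPG", "CJL1"))
```

For the image in question this gives site/drive 0000 and a date in January 2004, so it fails the "is it from CJL1" test regardless of what the directory listing says.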

It's likely that, whatever the problem was (either a new server or a failed hard drive), they had to re-add all the images taken throughout the entire mission to the /opportunity/pancam directory, which gives all of the directories a last-modified date of 2014-12-5, even those holding images taken back in 2004. So yeah, do what Doug suggested for identifying them. I use that MER file name website all the time.

... Or you could use the Midnight Planets page, which knows what images are new, what sol they're from, what site they're from, etc, etc. Heck, right now it's even showing images that haven't made it to Exploratorium or the JPL raw images site yet. I'm going to pretend that's a feature.

Obviously something strange is up with Exploratorium right now, I have no idea what. I hope it comes back.

Reformat was planned for 3862 according to the latest update.

I'd be surprised if it was on 3862, Oppy was quite active that sol. Maybe 3865, there is nothing in the tracking database for that one.

EDIT. Furthermore, the tracking data says that on 3865 there was some old data sent down (from 3828) which if correct (and I understand this properly) implies that the flash hadn't been reformatted and was successfully mounted to enable access. Curious.

The project prepared for a reformatting of the flash memory on Sol 3862 (Dec. 4, 2014).

This *could* be read as the date that the reformat planning took place was 3862, implying that the actual reformat would take place after that.

Don't over-interpret the database. Putting data in some shiny new flash is not inconceivable. And if you track down the 'new' old products that sol, or from prior sols in crippled mode, you can find that they did not increase the number or completeness of products on the ground. Sometimes things just show up there: I do not know if it is reprocessing on the ground, retransmitting from an orbiter, or something else.

Today's surprise

1. What new EDRs from ANY sol were received on sol 3868?

Number of EDRs received by sol, sequence number, and image type:

| Sol | Seq.Ver | ETH | ESF | EDN | EFF | ERP | Tot | Description |
|---|---|---|---|---|---|---|---|---|
| 03868 | p1211.03 | 2 | 0 | 0 | 2 | 0 | 4 | ultimate_front_haz_1_bpp_pri_15 |
| 03868 | p1305.07 | 2 | 0 | 0 | 2 | 0 | 4 | rear_haz_penultimate_0.5bpp_pri17 |
| 03868 | p1311.07 | 2 | 0 | 0 | 2 | 0 | 4 | rear_haz_ultimate_1_bpp_crit15 |
| 03868 | p1741.03 | 2 | 0 | 0 | 2 | 0 | 4 | navcam_1x1_az_252_3_bpp |
| 03868 | p1944.07 | 6 | 0 | 0 | 6 | 0 | 12 | navcam_3x1_az_162_3_bpp |
| 03868 | p2433.35 | 4 | 0 | 0 | 4 | 2 | 10 | pancam_Drive_Direction_2x1_L7R1 |
| 03868 | p2554.35 | 13 | 13 | 0 | 0 | 2 | 28 | pancam_Calera_LocustFork_Cottondale_L234567Rall |
| 03868 | p2601.05 | 4 | 2 | 0 | 0 | 2 | 8 | pancam_tau_L78R48 |
| Total | | 42 | 18 | 4 | 25 | 8 | 97 | |
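If anyone wants to sanity-check a listing like this, a small sketch along these lines parses the whitespace-separated rows and confirms that each row's Tot equals the sum of its five product-type counts. The column meaning (ETH, ESF, EDN, EFF, ERP, then Tot) is taken from the header line; the parsing approach and names are just my assumption about a convenient way to read it.

```python
ROWS = """\
03868 p1211.03 2 0 0 2 0 4 ultimate_front_haz_1_bpp_pri_15
03868 p1305.07 2 0 0 2 0 4 rear_haz_penultimate_0.5bpp_pri17
03868 p1311.07 2 0 0 2 0 4 rear_haz_ultimate_1_bpp_crit15
03868 p1741.03 2 0 0 2 0 4 navcam_1x1_az_252_3_bpp
03868 p1944.07 6 0 0 6 0 12 navcam_3x1_az_162_3_bpp
03868 p2433.35 4 0 0 4 2 10 pancam_Drive_Direction_2x1_L7R1
03868 p2554.35 13 13 0 0 2 28 pancam_Calera_LocustFork_Cottondale_L234567Rall
03868 p2601.05 4 2 0 0 2 8 pancam_tau_L78R48
"""

TYPES = ("ETH", "ESF", "EDN", "EFF", "ERP")


def parse_row(line):
    """Split one listing row into sol, sequence, per-type counts, total, description."""
    parts = line.split()
    return {
        "sol": int(parts[0]),
        "seq": parts[1],
        "counts": dict(zip(TYPES, (int(x) for x in parts[2:7]))),
        "total": int(parts[7]),
        "description": " ".join(parts[8:]),
    }


rows = [parse_row(line) for line in ROWS.splitlines() if line.strip()]

# Each row's Tot should be the sum of its five type counts.
rows_consistent = all(sum(r["counts"].values()) == r["total"] for r in rows)

# Column sums over the rows actually listed.
column_sums = {t: sum(r["counts"][t] for r in rows) for t in TYPES}
listed_total = sum(r["total"] for r in rows)

if __name__ == "__main__":
    print(rows_consistent, column_sums, listed_total)
```

The eight rows shown are internally consistent and add up to 74 products, which is less than the 97 on the Total line, so the listing as quoted here presumably leaves out some rows (for example the EDN products counted in the total).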

From the latest update:

A few more details in the release.

QUOTE

the project reformatted the rover's Flash memory on Sol 3862 (Dec. 4, 2014). Although the rover's operation improved immediately after the reformat, Flash behavior quickly deteriorated. Opportunity experienced a set of resets on Sols 3864 and 3865... After this, the project made the decision to operate the rover without the use of Flash memory until another fix can be implemented. On Sol 3866... the rover was booted without using Flash (and instead storing data products in volatile RAM memory) and all the fault conditions were cleared. On Sol 3867 (Dec. 9, 2014), Opportunity performed light science activities in preparation for driving on the next sol. Longer term, the project is developing a strategy to mask off the troubled sector of Flash and resume using the remainder of the Flash file system.

You beat me to it again FredK. =)

The short version: one bank, making up 1/7 of the flash memory, has been deemed bad. So the hope is to get the remaining flash memory up and working, and if all goes well Opportunity might very well get back to normal operation - though this RAM-only mode appears to work, so it's a good engineering test if nothing else.
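As a back-of-the-envelope illustration of what masking one bank out of seven leaves, here is a tiny sketch. The bank count of 7 comes from the "1/7" figure above; the bank index and the function name are hypothetical, and how the real file system would be laid out on the remaining banks is not something this models.

```python
from fractions import Fraction


def usable_fraction(total_banks, bad_banks):
    """Fraction of flash still usable after masking off the given bank indices."""
    bad = set(bad_banks)
    if not bad <= set(range(total_banks)):
        raise ValueError("bad bank index out of range")
    return Fraction(total_banks - len(bad), total_banks)


if __name__ == "__main__":
    remaining = usable_fraction(7, {6})  # bank index 6 is an arbitrary example
    print(remaining, f"{float(remaining):.1%}")
```

So masking a single bank would leave 6/7 of the flash, a bit under 86 percent, which is far more room than the RAM drive described in the earlier update.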
