Full Version: Robot Arm - Observations and Excavations

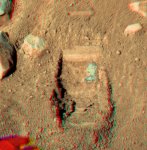

Wow, looks like we're near the "ice" already!

If that's ice, it looks awfully easy to scrape off, no? Makes me rethink the whole exposed-ice-under-the-lander idea.

Looks like a small dry powdery deposit of some sort to me - ice isn't whiter than white like that.

Doug

I don't know, the color in the images is quite a bit lighter than in the official releases, so maybe it isn't so white after all... and that bluish/blackish rim surrounding the white patch looks like dirty ice to my very untrained eye.

I don't think it's so easy to dig, it just looks scraped.

It just reminds me more of the Tyrone / Silica Valley / Paso Robles type deposits at Gusev than of the ice we see under the lander. Then again, the more I look at it, the more it looks like the top of a 'layer' of some sort, which just has to be the ice. We'll know soon enough - that's the fun with exploration.

Doug

I guess the next dig should already settle it.

If there is pure ice somewhere, wouldn't it be much deeper in the ground, as a result of greater weight/compression?

The thrusters probably melted that layer slightly.

It's damn hard to infer color properties from overexposed raw data. Whatever it is, it looks much darker in the longer-wavelength filters. I'd expect ice to be nearly uniformly reflective at wavelengths below 1 micron and to be the brightest stuff in the scene. The filtered images show that there are otherwise grayish rocks that appear brighter at the red end of the spectrum, while at the blue end the stuff is much more reflective (apart from the lander deck, it's by far the brightest stuff, and it's still overexposed).

If I'd hazard a wild guess, I'd say this stuff might actually turn out blue-greenish in natural color once exposures are adjusted.
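To illustrate why overexposure gets in the way of the filter comparison above, here is a minimal sketch. It assumes 8-bit raw frames in which a value of 255 means the pixel clipped, and it uses made-up pixel values rather than real Phoenix data. The function name `blue_to_red_ratio` is hypothetical. A clipped pixel only gives a lower bound on the true brightness, so the sketch simply leaves out any position that clipped in either filter.

```python
SATURATED = 255  # assumption: 8-bit frames, 255 means the pixel clipped


def blue_to_red_ratio(blue_pixels, red_pixels, saturated=SATURATED):
    """Ratio of summed blue to summed red brightness over the same patch.

    Only pixel positions that are unsaturated in BOTH filters are used.
    Returns None when no usable pixels remain or the red sum is zero.
    """
    usable = [
        (b, r)
        for b, r in zip(blue_pixels, red_pixels)
        if b < saturated and r < saturated
    ]
    if not usable:
        return None
    blue_sum = sum(b for b, _ in usable)
    red_sum = sum(r for _, r in usable)
    if red_sum == 0:
        return None
    return blue_sum / red_sum


# Made-up values for a bright patch: two of the four blue pixels clipped.
patch_blue = [250, 255, 255, 240]
patch_red = [120, 130, 110, 120]
ratio = blue_to_red_ratio(patch_blue, patch_red)
```

A ratio well above 1 would mean the patch is relatively brighter in the blue filter, but with most of the patch clipped the number rests on very few pixels, which is the difficulty described above.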

The thrusters could also have blown away the "white layer" (if it is not ice) and exposed the ice beneath. In that case, the white material would have been spread all over the place.

Occam's Razor says it is ice.

Here is the trench with some zoom and a bit of resampling (screen shot from Stereo Photo Maker).

There doesn't appear to be any depth to the whitish area, which if it were the top layer of an underground ice layer seems strange. More like something lying on the bottom of the first trench. Ok I know there are no liquids at this temp and pressure but the image is suggestive of a fluid flow that froze??

Yes, I do know that is supposed to be impossible, but hey, this is Mars, and this may be the first up-close contact with Martian brine / salts / minerals / acid or whatever that blue-green stuff is made of.

PS, that strange pattern I noticed on the lower right corner of the first rear dig wall has reduced in size and lost its whitish core??

http://www.unmannedspaceflight.com/index.p...st&id=14544

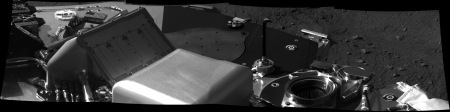

Thanks for the images, all. I see they decided to dump the latest dig onto the smooth track left by the sliding/rolling rock.

Ant, is there any chance you could post the colour parallel eye version at full resolution (or the two separate full-resolution colour frames)? With stereo photomaker, we can view in stereo no matter how large the image. Thanks!

Yes, I can do that, Fredk.

Hail Ants,

With respect, you have the left and right eyes reversed. The trench comes out as a hill. BTW I like the blinker. Always a goodie and very informative.

Greg

Greg: it's parallel, not cross-eyed (or maybe I got confused...).

Ants,

When I copied and pasted your image into Stereo Photo Maker (as a side by side stereo image) and swapped the left and right images, the cross eyed 3d effect was perfect. BTW what is parallel? Aren't the images left and right as taken by the stereo camera? But hey, I'm not an expert, just learning from them.

Greg

Parallel is when you focus behind the images; cross-eye is when you focus in front of them. I always found cross-eye easier; I have a hard time making myself focus at infinity when there's a computer screen just in front of my nose.

See the Phoenix work area and the RA reach.

Beyond a certain point, it's impossible to do parallel. Cross-eyes works pretty much no matter how much displacement there is between the two images.
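The left/right swap discussed above is easy to sketch in code. A parallel pair puts the left-eye frame on the left; a cross-eyed pair puts it on the right, so converting one to the other just means swapping the two halves of the side-by-side image. This is a minimal illustration on a plain list-of-rows "image", not how Stereo Photo Maker does it internally; the function name `swap_stereo_halves` is made up for the example.

```python
def swap_stereo_halves(rows):
    """Swap the left and right halves of a side-by-side stereo pair.

    rows: list of pixel rows, each row a list with an even number of pixels.
    Turns a parallel-view pair into a cross-eyed pair, and vice versa.
    """
    swapped = []
    for row in rows:
        if len(row) % 2 != 0:
            raise ValueError("side-by-side image must have an even width")
        half = len(row) // 2
        # Right half first, then left half.
        swapped.append(row[half:] + row[:half])
    return swapped


# Tiny 2x4 stand-in image: "L" pixels are the left-eye frame, "R" the right.
parallel_pair = [
    ["L1", "L2", "R1", "R2"],
    ["L3", "L4", "R3", "R4"],
]
cross_eyed_pair = swap_stereo_halves(parallel_pair)
```

Viewing a parallel pair cross-eyed without the swap inverts the depth, which is why the trench can come out as a hill.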

Can it be silica?

http://www.nasa.gov/mission_pages/mer/mer-20070521.html

At a recent press conference one of the panelists thought it was unlikely to be silica, based on the geological context (no evidence for volcanism or geothermal activity at the Phoenix site). I'd add that, apart from a few white bits in the first scoop, there's no sign that the white stuff was moved around by the scoop, and I'd expect a powder to get easily redistributed in the trench by the scoop. As for the brightness, it's hard to say - on our planet, ice can vary quite a bit in its brightness. It's worth keeping in mind that the albedo of Martian soil is typically quite low (really it should be called brown rather than orange), so ice could appear much brighter than the soil.

Here's a quick look at the sol 11 "Baby Bear" scoop, from the images that are down so far.

Context of the scoop

This was discussed in yesterday's press conference, and the answer is that silica would be completely unexpected in this environment.

I can understand that viewpoint, but on the other hand, wasn't the silica that was discovered by MER completely unexpected there too?

Ice?

What are you comparing - same filter, different times, or same time, different filters? Can make a big difference.

Airbag

Ahhh - You're correct, it's just a filter change. I should have looked at the time stamps first. It had the look of a depression left by sublimed ice. The white area must be overexposed, giving it the appearance that the depression is filled.

Has anybody commented on what appears to be the obvious strata in the rear (distal) wall of Dodo Trench? [For reference, a good example (among many) appears in the June 3 blog by AJS Rayl in the Planetary News: Phoenix (2008) Phoenix Scoops Mars and Digs It--in this instance the site is called the Knave of Hearts; I surmise to distinguish it from the trench itself.] I've searched in vain for comments on the strata. If these are "Mars-annual", it is hard to understand how the "varves" can form underneath the apparent topsoil/dust layer.

Occam's Razor fails on Mars because our simplest explanations are based upon terrestrial experience.

But Odyssey data indicates a major source of hydrogen in the immediate subsurface. And CO2 (which doesn't have hydrogen anyway) is a gas at this pressure/temperature. So even operating on martian data, I'd agree that Occam's Razor falls hard on the side of this being (largely) water ice. We don't yet have absolute proof, but I'd say we're well in excess of 90% confidence that this is water ice at some significant degree of concentration. (Perhaps salty or acidic.)

Well, speaking as an amateur myself, I've always wondered about the electrostatic properties of Martian dust. Are the clods sticking together because of this?

On this subject of electrostatic processes - not sure if the arm can reach, but it would be interesting to see if particles ended up being stuck to any underside flat surfaces underneath the lander, and to see if over a period of time any such particles drop off the underside - might give some insight into electrostatic properties or other 'sticking' mechanisms (so far the under-lander images have only shown the ground underneath the lander, and the underside itself is tantalisingly out of view at the top of the image, apart from the descent thrusters).

Also, switching to the Mars rovers: can a rover's robotic arm look at any underside surfaces of the rover with the MI, to get a close-up of any dust sticking to the underside of the rover or its solar arrays?

But Odyssey data indicates a major source of hydrogen in the immediate subsurface.

...but the Odyssey GRS apparently has a resolution of 300km, so presumably localised concentrations of "other stuff" (e.g. kieserite) aren't ruled out. My uninformed personal opinion was that the scoop's hitting small patches of salts, but that Holy Cow is ice, based purely on visual appearance... and then DnD 2 changed my mind. Er, I think. (Who knows? It's just nice to know that TEGA will give us an answer in a few sols' time.)

That said, I suppose if TEGA sees both ice and salts, we could be debating what this or that particular whitish patch is for a long time to come.

would be interesting to see if particles ended up being stuck to any underside flat surfaces underneath the lander and see if over a period of time any such particles drop off the underneath - might give some insight into electrostatic properties or other 'sticking' mechanisms

The MECA microscope will actually be doing a bit of this. In addition to the "sticky" silicone substrate that we've seen so far, there are sixty-nine different substrates on the wheel. By seeing how much the dirt sticks to the various substrates as it is rotated from horizontal to vertical, a lot can be learned about the electrostatic (and magnetic) properties of the soil.

On this subject of electrostatic process - not sure if arm can reach but would be interesting to see if particles ended up being stuck to any underside flat surfaces underneath the lander

I don't think this is what you had in mind, but we can see what I think are some essentially vertical surfaces that were coated with light-coloured splotches during landing (see white arrows in image below). Most of those splotches are on upwards-facing surfaces, but if I've got my geometry right the arrowed ones are on vertical or even slightly downwards-facing surfaces.

Glad the location from which the spring fell has been identified.

It would've only been a matter of time before Hoaxland declared it as 'evidence'

...Occam's Razor fails on Mars because our simplest explanations are based upon terrestrial experience.

I suspect that what you have in mind is not Occam's Razor, but what is sometimes called the "Rosenthal effect" (or experimenter expectancy effect) named for psychology Prof. Robert Rosenthal, recently honored for "groundbreaking research into experimenter bias and self-fulfilling prophecy": that is, for proving that most people see only what they expect to see, and that their expectations influence the outcome of supposedly unbiased experiments and trials (in the social sciences and in jury trials, at least). Based on personal experience, when it comes to data-deficient Mars this effect is hardly restricted to the social sciences, natural scientists being people too, and that's what you seem to be saying also (i.e., if we expect Mars to be analogous to Earth, then we will interpret it using terrestrial experience). See, e.g.,

http://www.newsroom.ucr.edu/cgi-bin/display.cgi?id=1752

or http://www.encyclopedia.com/doc/1O87-exper...ectncyffct.html

Still, ice seems the most likely explanation for that patch, with salts a close second, and silica or some other substance way down there, at least for the moment (keeping in mind that combinations are permitted too). Getting the data could end that argument!

-- HDP Don

Well, what result did Dr. Rosenthal expect?

"Rosenthal Effect"...thanks, Don!  I've long suspected that such a thing might exist, and our experience in planetary exploration seems to prove it in spades. We are often surprised that the Solar System isn't what we expected it to be, and we shouldn't be.

Suggest a new mantra to be chanted every time we see a new piece of data from UMSF: "It's an alien world, it's an alien world..."

Congratulations, Oersted and EGD and nprev, for spotting that recursive paradox, one that was considered early on (and that Dr. Rosenthal apparently tried to alleviate by effectively isolating himself from the actual experiments). So, is Dr. Rosenthal telling us that the canals on Mars are really there after all, and that it's simply our modern expectations that aren't up to snuff??

-- HDP Don

Just looked recursion up in my dictionary:

Recursion, see Recursion

Very interesting: the French translation is "récursion".

Thanks for that, which famously gets the basic idea across (lots of well-informed and clever people on this forum). Actually some of the dictionary definitions for recursive are worse, but I just meant the simplest: defined in terms of itself. As an example of a similar paradox, Paul Knauth told me that at the beginning of the semester, he likes to ask in class for a show of hands by "those students who never raise their hands in class".

With regard to Prof. Rosenthal and experimenter expectancy, I should have mentioned that modern social/medical scientists habitually engage in what is called double-blind testing. This means that neither the tester nor the subject knows who is receiving, say, the Coke or the Pepsi in taste testing. Similarly, in drug trials, neither the physicians nor the patients (or the rats, in animal testing) know who is receiving what - the new treatment, the standard treatment, or the placebo (cf. the famous placebo effect). In other words, zero special expectations by design. NASA seems never to have considered such a careful protocol for Mars, possibly because Mars the planet is neither a person nor an animal (although the story of the blind men examining the elephant comes to mind). Nevertheless, as implied in the post that started this commentary, when you are told to "follow the water" and are confidently expecting to find ice where you land, might this bias your thinking about the bright patch under the lander, or about other bright patches?

Another example might be if Phoenix identifies some (long-sought) carbon compounds. Our expectations regarding these as indicators of life might blind us to the fact that carbonaceous chondrites (a common type of meteorite) have been delivering copious quantities of organic compounds to the surface of Mars for billions of years. Although the scientists might be properly cautious about the significance of such an ambiguous discovery, the news media would probably be much less so (I can see the headlines now). The ultimate victims of the Rosenthal effect might then be the reporters (and thus the public), whose extremely high expectations for science itself could lead them astray.

Being well-informed and clever,

-- HDP Don
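For anyone who wants the double-blind idea from the post above in concrete form, here is a minimal sketch. It is only an illustration under stated assumptions: a third party runs the assignment, hands the tester nothing but neutral sample codes, and keeps the code-to-treatment key sealed until the results are in. The function name `blind_assignment` and the `S001`-style codes are invented for the example.

```python
import random


def blind_assignment(subjects, treatments, seed=None):
    """Randomly assign treatments to subjects behind neutral codes.

    Returns (labels, key):
      labels maps subject -> neutral code (all the tester and subject see)
      key    maps code -> treatment (held sealed by a third party)
    """
    rng = random.Random(seed)
    # Spread treatments as evenly as possible, then shuffle who gets what.
    pool = [treatments[i % len(treatments)] for i in range(len(subjects))]
    rng.shuffle(pool)
    # Shuffle the codes too, so the code order reveals nothing.
    codes = [f"S{n:03d}" for n in range(1, len(subjects) + 1)]
    rng.shuffle(codes)
    labels = {}
    key = {}
    for subject, code, treatment in zip(subjects, codes, pool):
        labels[subject] = code
        key[code] = treatment
    return labels, key


subjects = ["ann", "bob", "cat", "dan", "eve", "fay"]
treatments = ["new treatment", "standard treatment", "placebo"]
labels, key = blind_assignment(subjects, treatments, seed=1)
```

Results are recorded against the codes only, and the key is opened after the measurements are final.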

I will point out that tests to make general-to-specific characterizations of large, complex systems don't lend themselves well to double-blind testing concepts. I mean, would you have the MERs bring along a suite of various rock types with them to Mars, selected by a group of people who have no communication with the PIs, and have every measurement taken on Mars include this test suite, with the PIs not being informed of which set of results belonged to native Martian rock and which to the terrestrial samples?

As you see, the specific double-blind process doesn't lend itself to the work at hand. Not that I don't see a need for some way to try and reduce the Rosenthal effect.

That said, what I note strongly in the process of designing science payloads for planetary probes is that it seems to reward those who have developed very detailed models of their expected findings, and have thence designed their instruments to most effectively collect the expected data.

It seems as if any experiment proposal that includes the phrase, in any form, "We don't know what we'll find" is automatically rejected because of the possibility that, by not meeting some preselected expectation, the experiment runs a high risk of being viewed as a "failure."

That's a process that not only allows a fair amount of the Rosenthal effect, it fairly demands it. When you design your instruments to show you only what you expect to see, it's awfully hard to see those things that *are* there that you never expected.

One of the worst examples of this effect, I think, was the life detection suite aboard the Viking landers. They were designed to say Yes or No to a very specific (and very terrestrial) set of life-bounded conditions, so the PIs didn't look closely enough at what Maybe results might mean, or how they might be interpreted.

I think the worst unflown example of this effect would have to be a contender for the 2001 lander program who, if I'm remembering the details from Squyres' "Roving Mars" correctly, wanted to devote an entire science payload to positively identifying amino acids within the Martian regolith. That would have been a good portion of a billion dollars to answer what is probably not *nearly* the most useful question to be asking.

The spacecraft that suffered the least from this effect? IMHO, at least for fairly recent probes, I would say Stardust. Yes, the designers of the Stardust collectors had to make some assumptions about the size of the particles they were going for, and the density of particles in their collection location. But the whole point of Stardust was "Let's go grab some comet dust, bring it back, and then see whatever we see when we get our hands on it." That mission design, since it brought samples back to where a great multitude of tests could be run on them as appropriate, was able to follow a more simple paradigm of "grab what you can and then see what you've got."

It seems to me, though, that until we can bring samples back and have the luxury of running whatever tests on them that seem appropriate (to answer all the new questions that the initial test results pose), you have to narrow your data collection based on some form of triage theory. You can't fly all of the tools you want to fly that would truly enable you to just follow up on what you find rather than looking for what you expect. That's a given, considering mass budgets and funding budgets.

So, you *have* to narrow the focus to what you can afford to place in situ. Granted, the current process encourages that narrowing more than it should... but I'm not coming up with any good ideas on how to change the process to reduce the Rosenthal effect.

-the other Doug

Great post, oDoug!

That's exactly why I like the basic question that Phoenix is trying to answer: "Are there organic compounds on the surface of Mars in this locale?" That's constrained well enough to answer with the equipment available without making a whole bunch of other assumptions...good, focused science.

No disrespect to the Viking experiments intended, BTW. They were extremely audacious, but as Doug said there really wasn't very much interpretation space available for "maybe" results. We just don't know enough about both organic and inorganic chemistry in non-terrestrial conditions to draw definitive conclusions from any experiment designed to detect indirect evidence of biological activity.
