Full Version: Juno perijove 29

Mike, what is JNCE_2020259_29C00004_V01 testing?

I thought I should post a handful or two of pretty pictures for your enjoyment and mine:

PJ29, #14

Click to view attachment Click to view attachment

#19:

Click to view attachment

#20

Click to view attachment

Those images are post-processed examples of these reprojections.

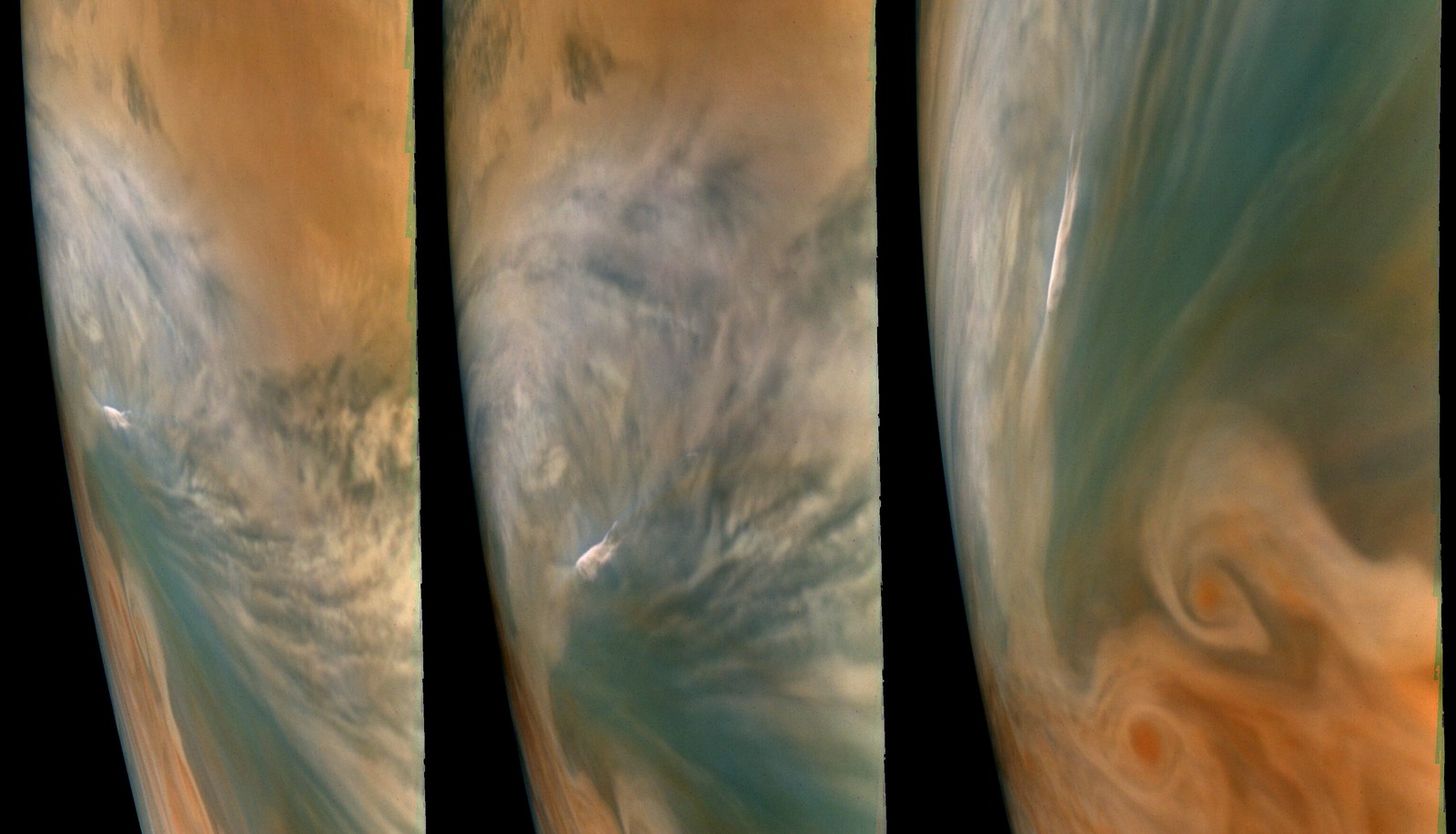

Cool bright spot in PJ29_32/34/35

Jupiter PJ29_32/34/35 Interesting Bright Spot 3-views Exaggerated Color/Contrast by bswift, on Flickr

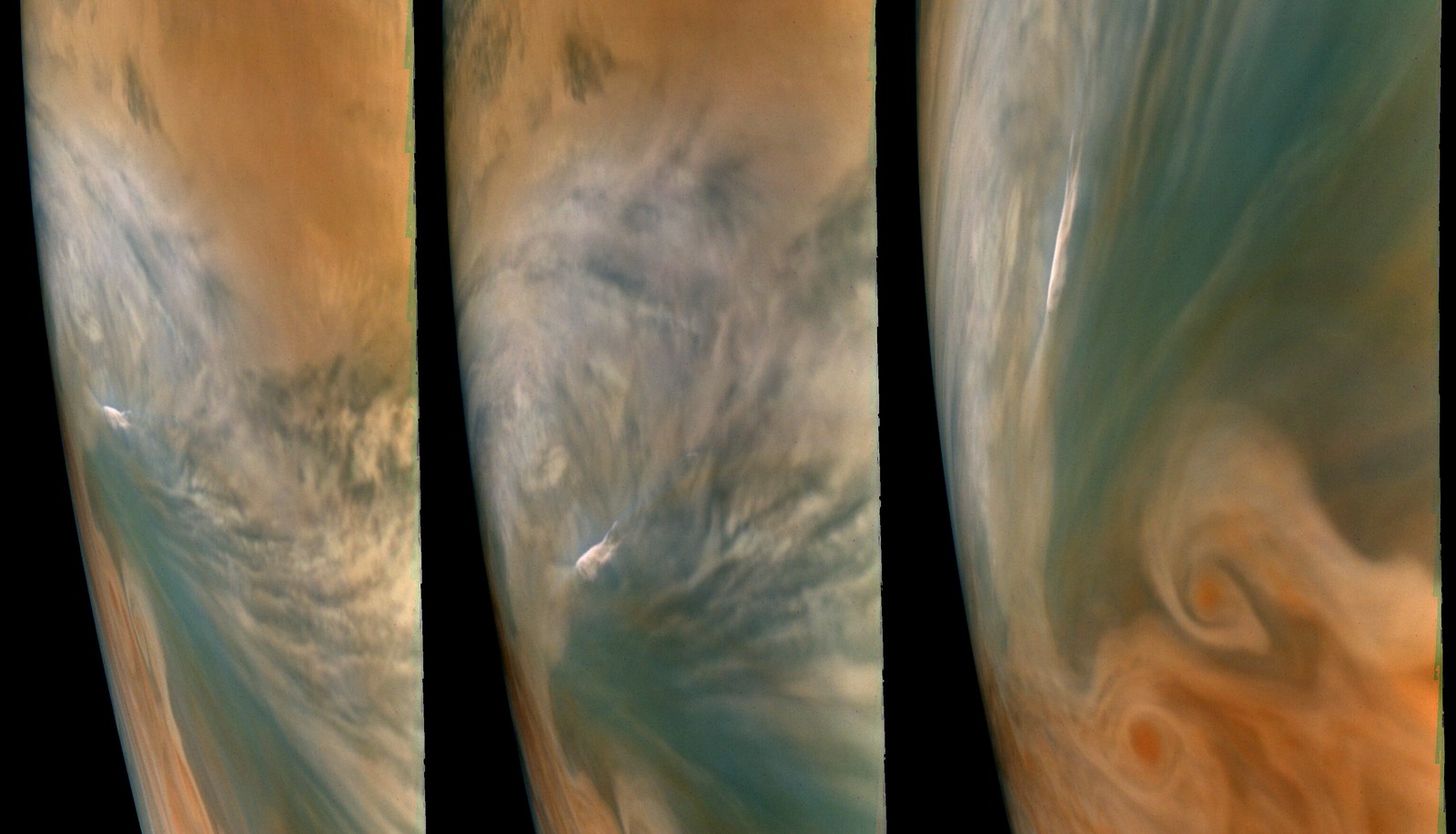

Some initial PJ29 detail images. With the perijoves becoming more northerly, we're getting clearer images of the northern filamentary region, though at the expense of sharp southern views.

I'm a little disappointed with the color I'm getting in the Northern latitudes, though my pipeline tends to white-balance the color channels, which I'm usually ok with (I'll leave the amazing true-color work to Bjorn)...

Jupiter - PJ29-20 - Detail

Jupiter - PJ29-24 - Detail

Jupiter - PJ29-26 - Detail

Jupiter - PJ29-23 - Detail

Jupiter - PJ29-27/28 - Detail

Jupiter - PJ29-43/44/46 - Map Projected

I'm particularly interested in this kidney bean shaped feature here:

Jupiter - PJ29-46/48 - Map Projected

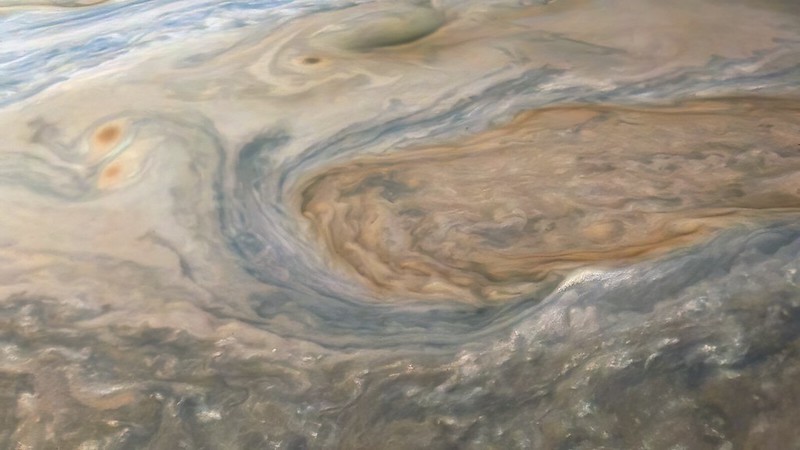

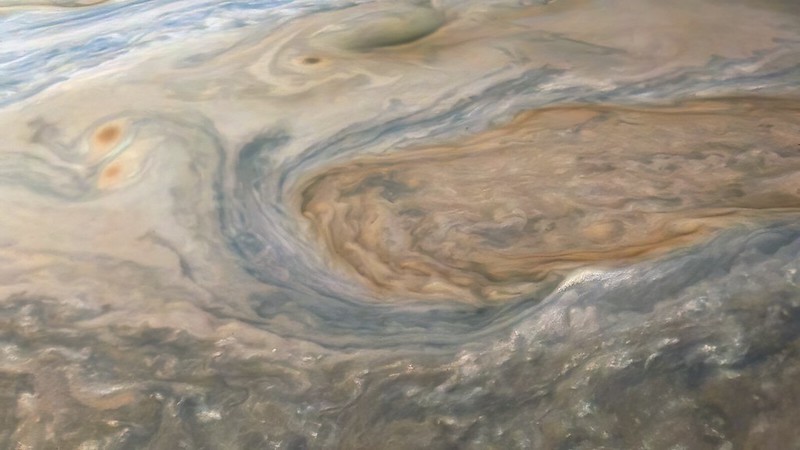

And two fish-eye 'marble' composites so far:

Jupiter - Perijove 29 - Composite - 1

Jupiter - Perijove 29 - Composite

Beautiful work Kevin, the Jupiter 'marbles' are my favorite Juno compositions.

...I'm a little disappointed with the color I'm getting in the Northern latitudes, though my pipeline tends to white-balance the color channels, which I'm usually ok with (I'll leave the amazing true-color work to

I've been getting color in the northern PJ29 images that I consider a bit suspicious. I'm therefore going to revisit some of the color tests/calibrations and among other things compare the PJ29 color to earlier images. So I'm very curious to know what you are disappointed with. Does your pipeline white-balance the images/color channels differently in different images or do you multiply the R/G/B values with the same fixed values for all of the images?

My process uses the same values and conversions for every image, so I could output something close to true color if I wanted to. But I tend to just use a proportional stretch when I convert the ISIS3 cubes to TIFFs, which in effect white-balances them (though not against any specific white value). I've done rather little to validate the 'true color' output, so I don't really use it.
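To make the distinction in this exchange concrete, here is a minimal sketch of the two approaches being contrasted: multiplying R/G/B by the same fixed values for every image, versus stretching each channel on its own. It is not anyone's actual pipeline. The function names, the sample pixel values, and the reading of "proportional stretch" as an independent per-channel min/max stretch are all assumptions made for illustration.

```python
def apply_fixed_gains(pixels, gains):
    """Multiply every pixel's (R, G, B) by the same fixed per-channel gains.

    The gains do not depend on the image, so colors stay comparable
    between images (hypothetical helper, for illustration only).
    """
    return [tuple(v * g for v, g in zip(px, gains)) for px in pixels]


def stretch_channels(pixels, out_max=1.0):
    """Stretch each channel independently to the range [0, out_max].

    Assumed interpretation of a stretch that 'in effect white-balances':
    each channel's own min and max are mapped to 0 and out_max, so the
    result depends on the content of the individual image.
    """
    stretched = []
    for channel in zip(*pixels):  # regroup values as R, G, B sequences
        lo, hi = min(channel), max(channel)
        span = (hi - lo) or 1.0  # avoid division by zero on flat channels
        stretched.append([(v - lo) / span * out_max for v in channel])
    return list(zip(*stretched))  # back to a list of (R, G, B) tuples


# Made-up reddish scene: R is always twice G, and G twice B.
pixels = [(0.2, 0.1, 0.05), (0.8, 0.4, 0.2), (0.5, 0.25, 0.125)]

fixed = apply_fixed_gains(pixels, gains=(1.0, 1.0, 1.0))
balanced = stretch_channels(pixels)

print(fixed[1])     # brightest pixel keeps its reddish ratio
print(balanced[1])  # brightest pixel becomes neutral after the stretch
```

With fixed gains the reddish cast survives, while the per-channel stretch maps the brightest pixel to equal R, G and B. That is one way an overall color difference between northern and southern scenes could be flattened out before it reaches the final image.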

Here is a list of links to intermediate PJ29 renditions I've uploaded thus far.

I might update that link list occasionally if time allows, probably also with links to other PJs.
